Every CMO I talk to right now is living the same contradiction. Output is up. Content volume doubled. The team is shipping more campaigns than ever. And pipeline didn’t move.

The AI tools are working exactly as advertised. They write faster, design faster, segment faster. But the results feel generic. Five teams interpreting the same brief five different ways — now doing it at machine speed. More content, same conversion rate. More outbound, same reply rate. The CEO is asking why the AI investment isn’t showing up in the funnel, and the honest answer is: you don’t know.

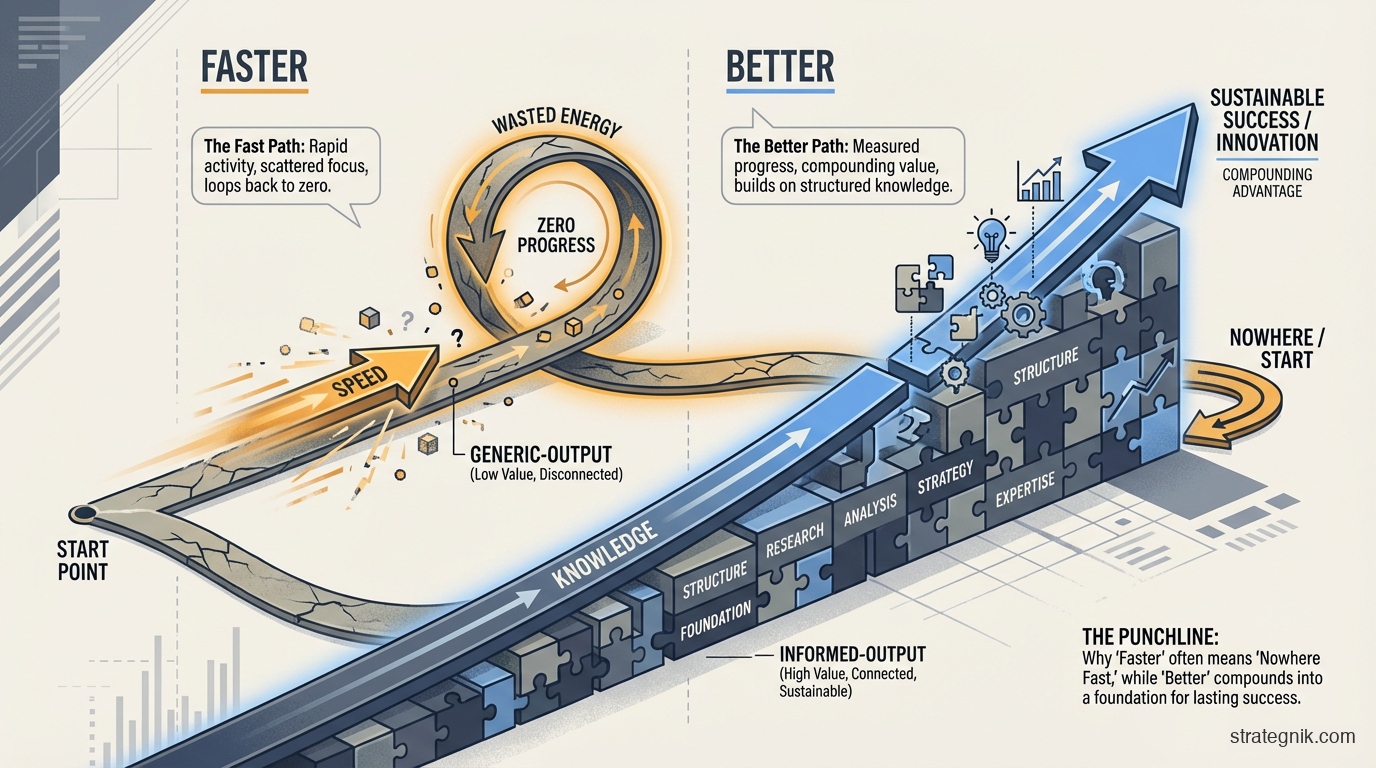

I’ve had this conversation with enough GTM leaders this year to see the pattern. The tools aren’t broken. They’re fast. But fast and good are different things, and most teams are getting one without the other.

Here’s the diagnosis.

Fast is easy. Better is the hard part.

AI gave your team speed for free. Any model can write a first draft, generate ad variants, summarize a call, or build a campaign brief in minutes. The speed is real. It’s also table stakes — every one of your competitors has access to the same models.

The problem is what the speed runs on. When your content writer uses AI to draft a blog post, what does the model actually know about your positioning? When your demand gen team uses AI to write email sequences, what does it know about your ICP — the real one, not the paragraph in a deck from 2024? When your SDRs use AI for outbound, what does it know about how you win against specific competitors?

The answer, for most teams, is: not much. Each tool gets whatever context someone remembers to paste into a prompt. The content tool gets one version of the ICP. The email tool gets another. The sales enablement tool gets a third. None of them are wrong, exactly. They’re just different — because nobody encoded the canonical version in a form every tool can read.

So you get speed without coherence. Volume without precision. Every tool is producing at full velocity, but they’re all pointed in slightly different directions. Multiply that across a quarter and you get exactly what the numbers show: more output, same pipeline.

Faster was never the bottleneck. Understanding was.

What AI engineering learned the hard way

The same thing just happened one layer below you, in the world of AI engineering. It matters because these patterns always migrate upward into the tools marketers buy.

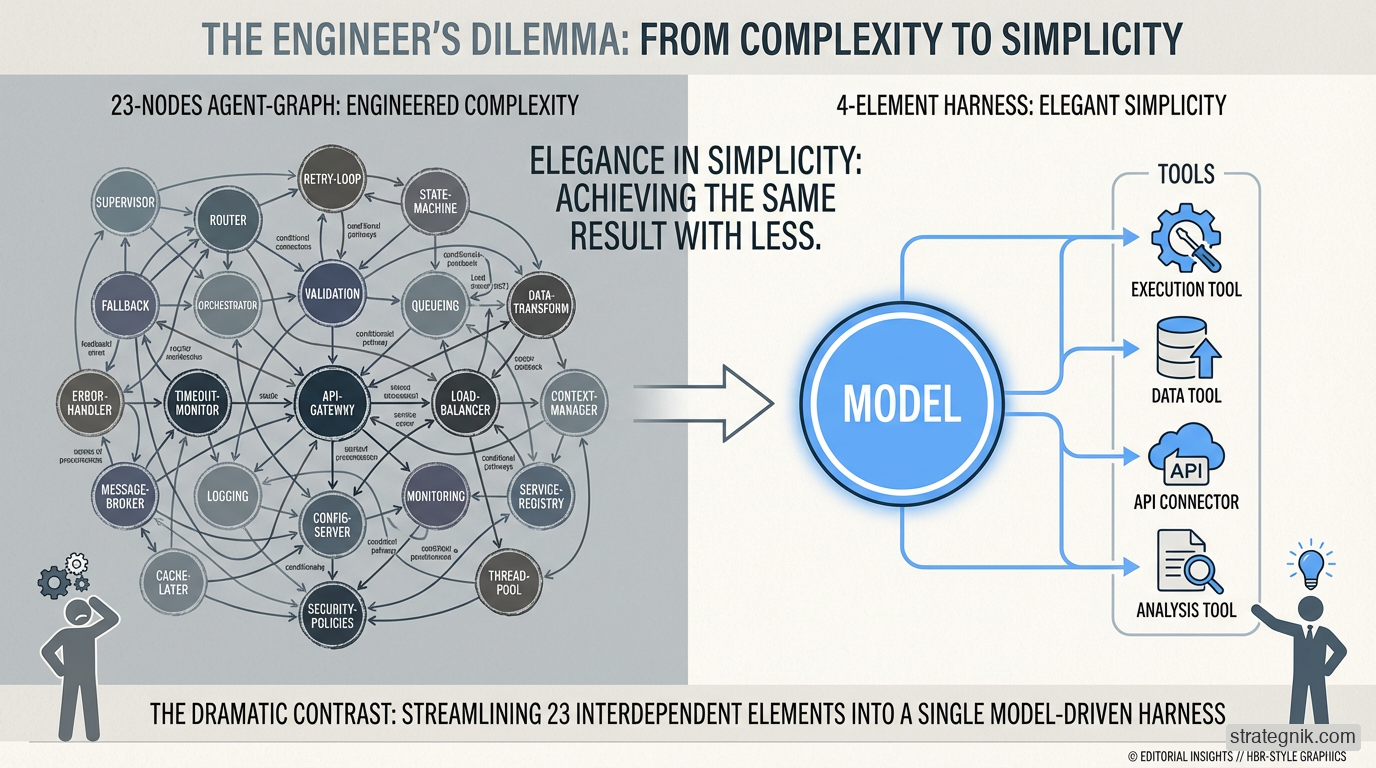

Two years ago, AI models were powerful but unreliable. They’d lose track of multi-step tasks. They’d make errors and couldn’t recover. So engineers built elaborate management systems around them — frameworks that told the AI exactly what to do at every step, because the AI couldn’t be trusted to figure it out. Think of it as a twenty-three-box flowchart for every task: go here if the customer says X, go there if the email bounces, check with a human before sending anything over $10K.

The frameworks worked. But they solved for a model that was dumb.

When models got smarter — capable of holding plans, recovering from errors, knowing when to use which tool — the flowcharts started getting in the way. The AI wanted to take a shortcut to the answer, but the framework forced it through twelve predetermined steps. The scaffolding that once made the model useful was now making it worse.

Anthropic — the company behind Claude — put the principle plainly: every component in an AI system encodes an assumption about what the model can’t do on its own. Those assumptions go stale as models improve. Scaffolding accumulates. Then, eventually, it comes down.

The best engineering teams stripped the scaffolding and discovered something: the model didn’t just need fewer instructions. It needed better knowledge. A smart model with access to your files is not a smart model that understands your business. Give it a thousand documents and ask a question, and it will search, guess, and fill in the gaps with plausible-sounding fiction.

The AI engineering world has a name for the fix: ontology. In plain terms, it’s a structured model of your domain — what things exist, what their properties are, and how they relate to each other. Instead of the AI searching through documents and hoping to find the answer, it queries a knowledge model it can actually reason over. Think of it as the difference between handing someone a filing cabinet and handing them an org chart with a glossary.

That’s the lesson: faster doesn’t come from more scaffolding. Better comes from structured knowledge. And it applies directly to your GTM.

Where your GTM knowledge actually lives

I’ve been writing about the Physics of Marketing for a while. The short version: GTM systems behave like physical systems. Mass, surface area, velocity, escape velocity. Light systems produce erratic results. Heavy systems dampen variance and compound over time.

Mass, in GTM terms, is the aggregated gravitational weight of your brand and product within the digital ecosystem. It’s your combined authority across every place where someone can interact with your product, your brand, or the content meant to inform people about either one. Search results, community threads, review sites, documentation, social presence, earned media, partner mentions, analyst coverage — all of it. Mass is the pure weight of total influence a product exerts on its market across time and space.

This mass is what turns “fast” into “better.” Without it, speed just amplifies noise. With it, every piece of content, every campaign, every touchpoint compounds against an existing gravitational field that pulls buyers toward you.

Most B2B marketing organizations misunderstand where mass comes from. They think it’s internal — the ICP deck, the positioning doc, the competitive battlecard. Those are important, but they’re not mass. They’re instructions. Mass is what the market actually encounters. It’s the aggregate footprint your brand occupies in the ecosystem — measurable, indexable, and increasingly quantifiable in ways that look a lot like file size: the total weight of your digital presence as it exists in the real world.

The context field is what connects internal knowledge to external mass. It acts as a communication layer that cushions your brand’s relevance into related and adjacent topics — correlating context and relevance so that your authority extends beyond the obvious keywords and into the spaces where your buyers are actually forming opinions. Without it, your internal knowledge stays internal, and your external mass grows randomly. With it, every asset you produce is informed by what you know and positioned to increase the gravitational pull of what the market sees.

This is why the output went up and pipeline didn’t move. Without the context field, humans have to be the filter for everything that goes to market — reviewing, correcting, re-contextualizing every piece of AI output before it ships. That’s the bottleneck. Not the production. The approval. Every asset waits for a person to decide whether it’s on-brand, on-message, and aimed at the right part of the ecosystem. The tools can produce autonomously, but without the field, they can’t place autonomously. So you get machine-speed production throttled by human-speed judgment, and the pipeline reflects exactly that constraint.

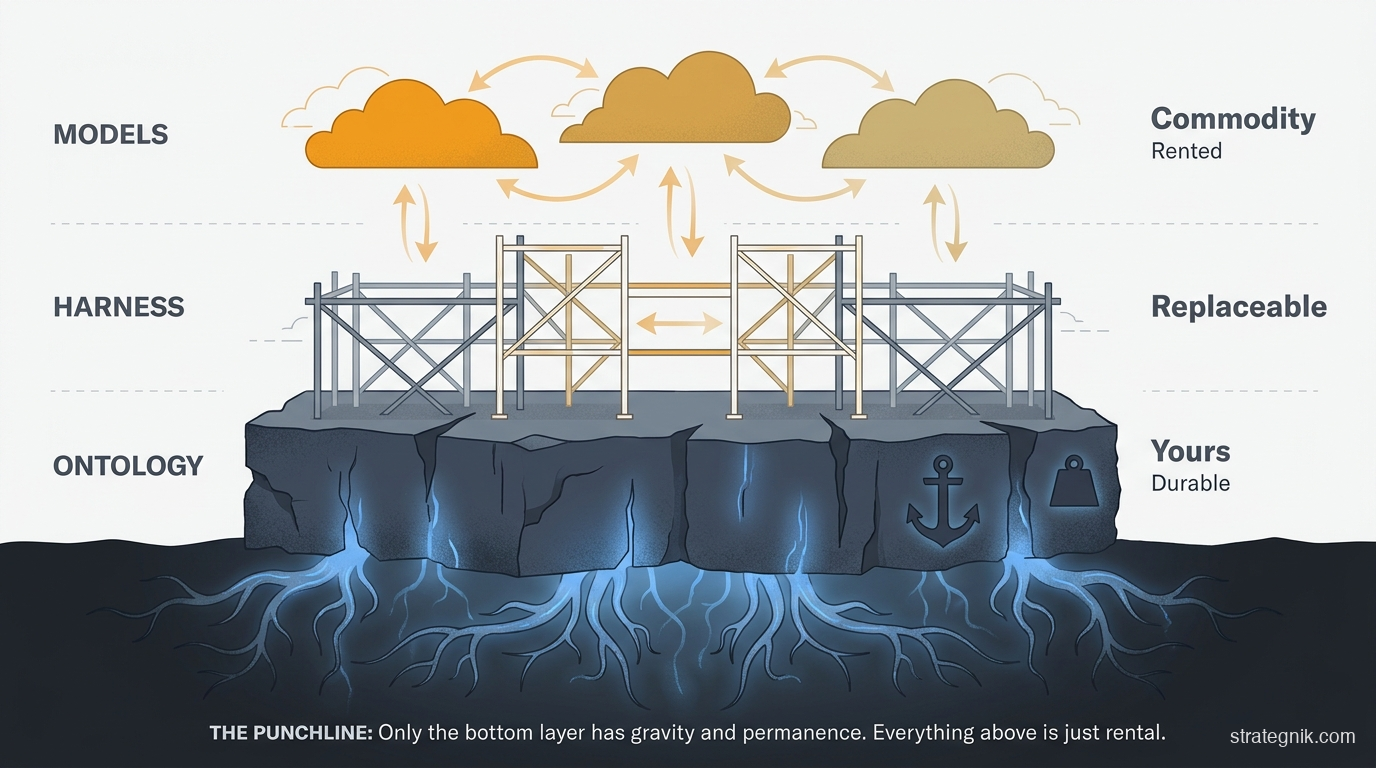

What’s yours and what’s rented

Here’s where the investment question gets sharp.

Everyone has access to the same frontier models. GPT, Claude, Gemini — they’re rented, they’re swappable, they get replaced every twelve months. That’s commodity, and the cost is dropping roughly 4x per year.

Everyone can build the wiring around those models — the agent frameworks, the tool integrations, the automation layer. The tooling gap between “sophisticated AI team” and “competent AI team” is closing fast. That’s commodity too.

What isn’t commodity — what cannot be bought, rented, or copied — is your structured domain knowledge. The representation of how your company understands its market, its buyers, its competitors, its message, its metrics — organized so that any AI tool can query it and get a consistent, authoritative answer. That has to be built. It has to be maintained. And it only works for you.

This is the layer that turns fast into better. And it’s the layer most teams skip, because building knowledge infrastructure isn’t as visible as launching another AI-powered campaign.

Every dollar your team spends on a new framework, a new agent builder, a new AI-native marketing point solution, is a dollar you could have spent building knowledge that compounds. The framework will be commodity in a year. Your structured understanding of how you win will still be working for you in five.

What “better” looks like in practice

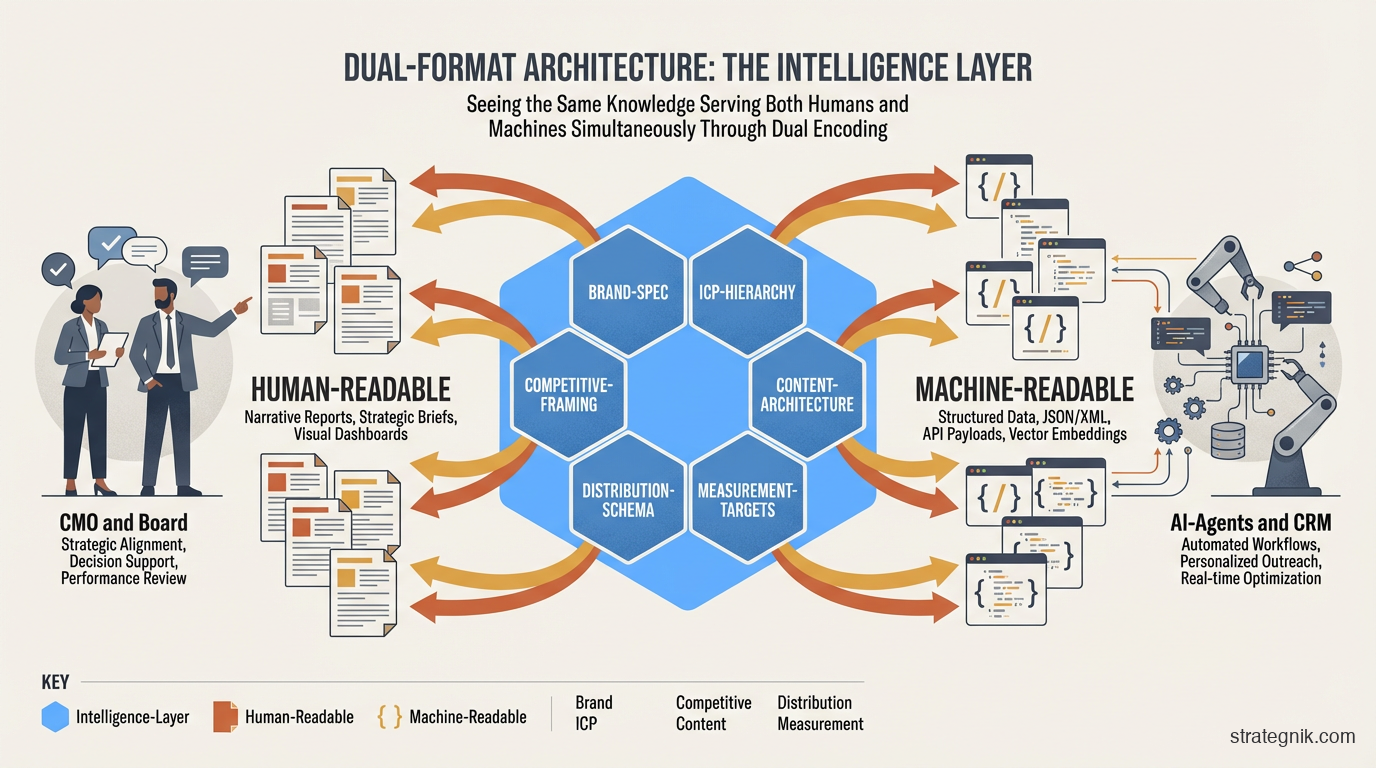

This is what I’ve been building with clients under the name Context Layer. I didn’t start by calling it an ontology — I called it a machine-readable operating context. Same idea, different vocabulary. The point is the same: encode what your company knows so that every tool in your stack operates from the same foundation.

Six components. Brand spec. ICP hierarchy. Competitive framing. Content architecture. Distribution schema. Measurement targets. Each one captured in two forms: a human-readable narrative that the CMO presents to the board, and a structured, machine-readable specification that every AI tool in the stack can query and act on without asking a human to re-explain it.

Here’s what that looks like in practice.

The ICP isn’t a paragraph in a deck that someone pastes into a prompt. It’s a structured taxonomy: which companies qualify, which don’t, what triggers a score change, what disqualifies. Exportable to the CRM, the enrichment stack, and the agent prompts in the same format. Every tool in your stack scores the same lead the same way because they’re all reading from the same source. That’s how you go from “fast outbound” to “outbound that converts.”

The competitive framing isn’t a PDF battlecard. It’s a structured knowledge base — competitor, claim, evidence, counter, proof point — organized so that a sales agent can query it for objection handling, a content system can cite it for comparison pages, and an answer-engine optimization pipeline can structure it into schema markup for AI search. When a new competitor emerges, you extend the knowledge base. You don’t rewrite thirty battlecards. That’s how you go from “fast content” to “content that positions.”

The measurement targets aren’t a dashboard someone built in Looker. They’re metric definitions with formulas, data source bindings, and alert thresholds — readable by humans and by agents alike. Every dashboard, every standup, every QBR runs on the same numbers with the same definitions because the definitions live in one place. That’s how you go from “fast reporting” to “reporting you can act on.”

This is the layer that makes the difference. Not more tools. Not more scaffolding. A shared foundation that turns fifty disconnected agents into a coordinated system.

Two things to change this quarter

First, stop evaluating AI-native marketing tools as features. Evaluate them as load on your knowledge model. If a tool requires you to re-explain your ICP, your voice, your competitive positioning, your metrics — in its own format, in its own way, from scratch — it’s adding scaffolding, not knowledge. It will make you faster without making you better. The tools that will survive the next two years are the ones that consume a structured context you own and bring back structured output you can integrate. If the tool can’t do that, it’s a transient phase.

Second, if you’re the CMO or the founder deciding between hiring a marketing leader and investing in an AI-native system, the question isn’t which one to pick. The question is whether either one is going to produce the structured knowledge — or just consume it. A CMO hire who will write down how the company understands its market, its buyers, and its wins, in a form the rest of the stack can operate from, is worth the salary. A CMO hire who will keep all that in their head and leave in eighteen months is just another form of scaffolding.

The models are rented. The frameworks are commodity. The only thing in your AI stack that actually makes you better — not just faster — is the structured knowledge of how your company wins. Build that, and everything you bolt on top compounds. Skip it, and every tool you buy makes you faster at going nowhere.