Your marketing team has AI access. They’re writing better emails. Maybe better landing pages. Maybe better sales outreach.

That’s not transformation. That’s a typing upgrade.

I’ve watched this pattern at a dozen companies over the past year. The CEO reads the BCG report on agentic marketing. The CMO rolls out ChatGPT Enterprise. The team starts prompting. Output goes up. Quality stays flat. Pipeline doesn’t move. Six months later, the same headcount is doing the same work slightly faster, and the CEO is wondering why the AI investment didn’t change anything.

The reason is structural. And it maps to the same physics that governs every other GTM problem.

The Control Degradation Problem

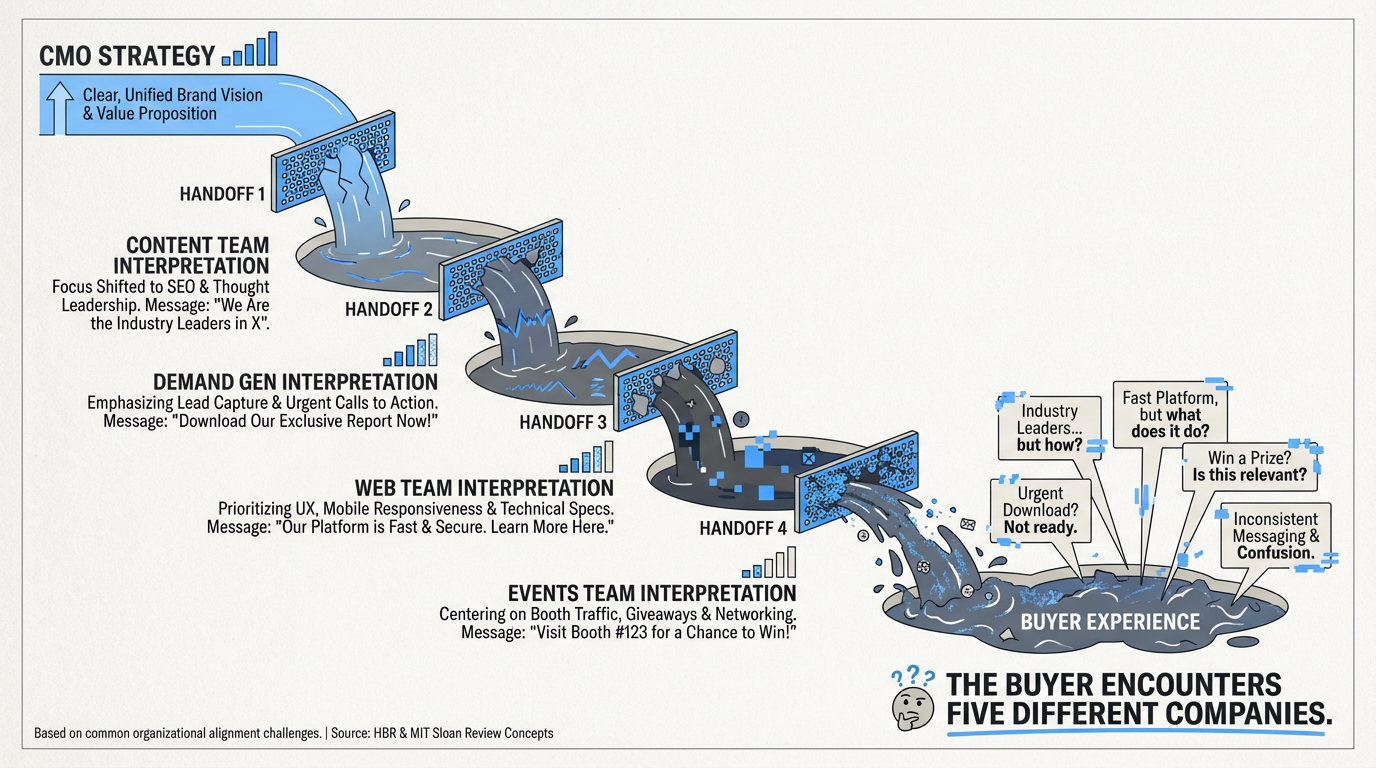

In a typical 20-person marketing org, strategy enters at the top and degrades at every handoff on the way to the market. The CMO defines positioning, ICP, and messaging. The content team interprets the brief one way. Demand gen interprets it differently. Web does its own thing. Events uses whatever deck was built last quarter. Marketing ops is tracking metrics that don’t match the goals.

The buyer encounters five different companies. Brand dilutes. Pipeline slows. Conversion drops. Sales cycles lengthen.

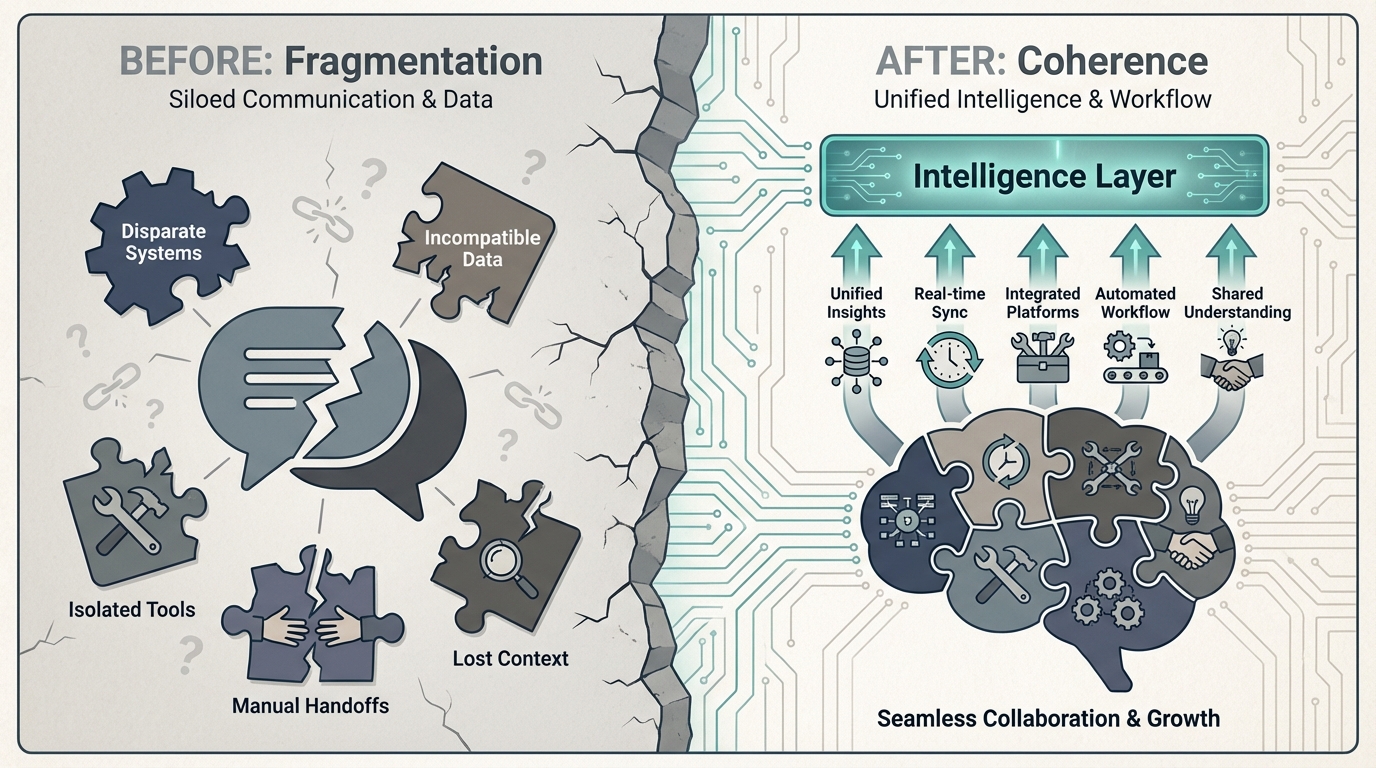

This is a friction problem. In the Physics of Growth framework, friction is the resistance that eats momentum, and it compounds at every handoff in the system. The organizational structure of most marketing teams is a friction machine.

Now add AI to that system. What happens?

AI amplifies output, not quality. More content produced faster from a fragmented system means more fragmented content at higher velocity. Five different voices become five louder voices. Agents prompted without shared context start from zero every time — no brand memory, no ICP knowledge, no competitive framing. The agent is a fast intern with no institutional knowledge.

A chatbot fed fragmented context produces fragmented output, only faster.

The Wrong Question and the Right One

Most companies ask: “How do we use AI in marketing?”

This is the wrong question. It focuses on tools and access. It generates activity, not architecture. It leaves the foundation unbuilt.

The right question is: What does our AI actually know about us — and is that enough to act on?

Ask your team that question. Ask them what happens when they prompt an AI tool to write a competitive email sequence. Does the tool know your ICP’s pain hierarchy? Does it know which competitor the prospect evaluated last? Does it know your brand voice well enough to produce something you’d send without editing every line?

If the answer is no — and for most companies it is — then you don’t have an AI strategy. You have ChatGPT access.

What an Operating System Actually Looks Like

The difference between “using AI” and “being powered by AI” is a layer of infrastructure I call the Context Layer — the encoded operating context that makes every AI tool, every agent, and every team member work from the same shared foundation.

It’s not a document people forget to check. It’s a system every tool references automatically. It’s not a prompt template for one use case. It’s persistent context that informs all outputs. It’s not a one-time brand exercise. It’s a living operating spec that updates as the market moves.

The Context Layer has six components: brand and voice specification, ICP and buyer context, competitive framing, content architecture, machine readability and distribution schema, and measurement targets. Each one is encoded in machine-readable format — not a PDF on a shared drive, but structured context that agents consume autonomously.
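To make "machine-readable" concrete, here is a minimal sketch of how the six components might be encoded as one structured object that any agent can load. It is an illustration under stated assumptions, not a reference implementation: the `ContextLayer` class, its `preamble` helper, and every inner key and sample value are hypothetical names invented for this example.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ContextLayer:
    """One field per component named above; all inner keys are illustrative."""
    brand_voice: dict
    icp: dict
    competitive_framing: dict
    content_architecture: dict
    distribution_schema: dict
    measurement_targets: dict
    version: str = "0.1"

    def to_json(self) -> str:
        # Serialize the whole layer so every tool or agent can load the same file.
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    def preamble(self, components):
        # Build a text block from the selected components, meant to be
        # prepended to an agent's instructions for a given task.
        data = asdict(self)
        missing = [name for name in components if name not in data]
        if missing:
            raise KeyError(f"unknown components: {missing}")
        return "\n\n".join(
            f"[{name}]\n{json.dumps(data[name], sort_keys=True)}"
            for name in components
        )


# Hypothetical sample values, for illustration only.
layer = ContextLayer(
    brand_voice={"tone": "direct", "avoid": ["jargon", "hype"]},
    icp={"segment": "Series B SaaS", "pains": ["slow pipeline", "long sales cycles"]},
    competitive_framing={"category": "context infrastructure"},
    content_architecture={"pillars": ["friction", "momentum"]},
    distribution_schema={"structured_data": "schema.org JSON-LD"},
    measurement_targets={"pipeline_growth_pct": 20},
)

# A competitive email task pulls only the components it needs.
email_context = layer.preamble(["brand_voice", "icp", "competitive_framing"])
print(email_context)
```

The design point is that the context lives in one versioned object rather than in individual prompts, so a change to the ICP or the competitive framing reaches every task that reads from it.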

But the layer serves two audiences. The obvious one is your internal agents and team: the layer gives them the context to produce on-brand, on-strategy output. The less obvious one is every external machine your buyer consults. ChatGPT, Perplexity, and Google AI Overviews are now the first touch in B2B research. If your content isn’t structured for LLM citation, you don’t exist in that buyer’s process. The Context Layer doesn’t just make your AI tools work better. It makes your company legible to every machine your buyer consults.

When the layer exists, the entire marketing function changes character. Content isn’t “write me a blog post about X.” It’s an agent generating from a keyword brief, brand spec, and ICP context — autonomously. Demand gen isn’t “draft this email sequence.” It’s signals triggering nurture flows without manual prompting. ABM isn’t “personalize this one-pager for Acme.” It’s account variants deployed at scale, on signal, in real time. And every page ships with structured data, entity definitions, and passage-level answer blocks that make it citable by AI from day one.
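As one example of what "structured data" on a page can mean, the sketch below builds a schema.org `FAQPage` block as JSON-LD from question-and-answer pairs. The schema.org types and properties used (`FAQPage`, `Question`, `acceptedAnswer`, `Answer`) are real vocabulary; the function names and the sample content are hypothetical, and nothing here guarantees that any particular AI system will cite the page.

```python
import json


def build_faq_jsonld(qa_pairs):
    # Map (question, answer) pairs onto schema.org's FAQPage structure.
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def to_script_tag(jsonld):
    # Escape "</" so answer text cannot close the script element early;
    # "\/" is a valid JSON escape for "/", so the payload stays parseable.
    body = json.dumps(jsonld, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical page content, for illustration only.
page = build_faq_jsonld([
    (
        "What is the Context Layer?",
        "The encoded operating context that AI tools, agents, and team members share.",
    ),
])
tag = to_script_tag(page)
print(tag)
```

Generating this block from the same source that feeds the internal agents keeps the definitions a page publishes consistent with the definitions the team works from.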

The difference is not better prompting. It is a shared context that every tool operates from.

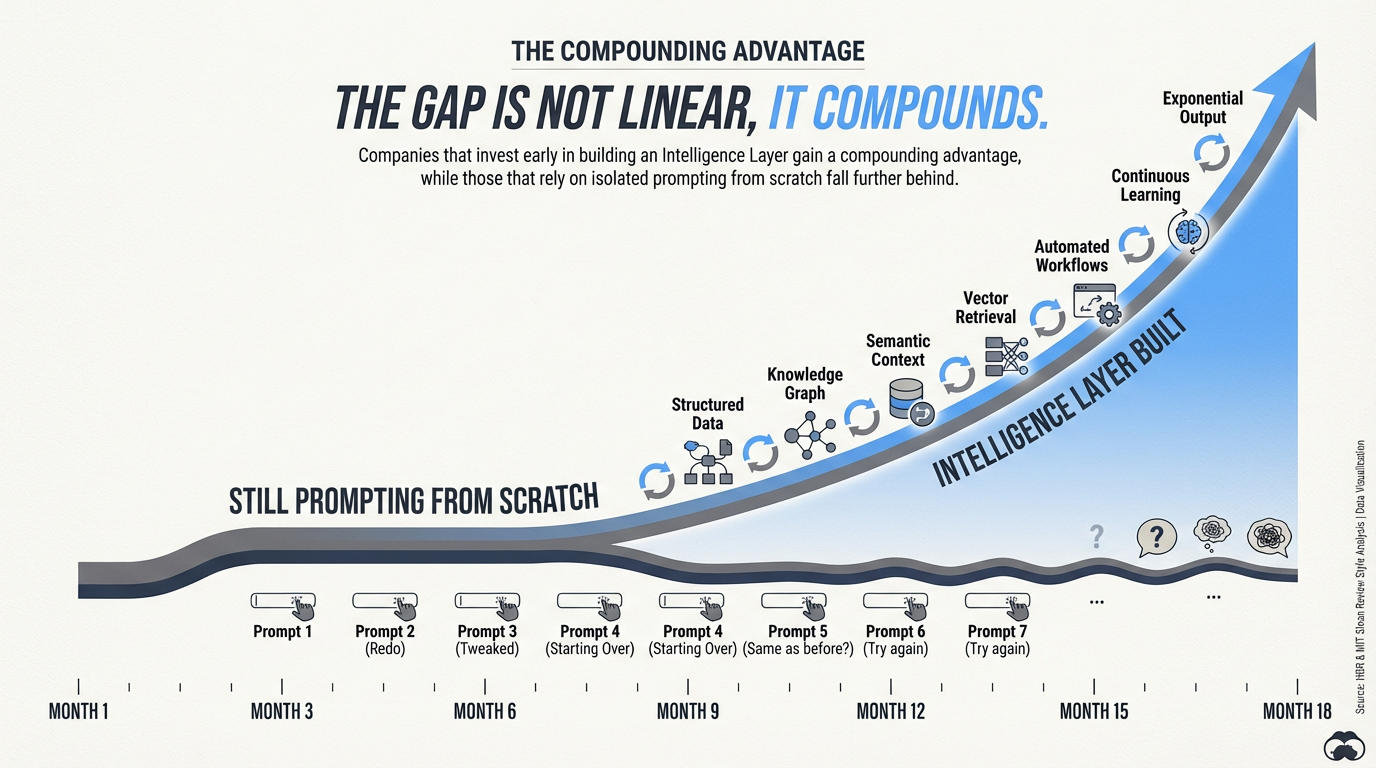

The Physics of Why This Matters Now

In Market Physics, momentum compounds. The companies that build the Context Layer first don’t just get a head start — they get a compounding advantage that widens over time. Every piece of content produced from shared context reinforces brand coherence. Every signal-driven campaign generates data that feeds back into the layer. Every month the layer runs, it gets sharper.

The companies that wait are still prompting ChatGPT from scratch. Still producing fragmented output. Still hiring to scale what architecture should handle.

The market data supports the urgency. Gartner projects 60% of brands will use agentic AI for one-to-one interactions by 2028. BCG expects agentic AI to handle over 20% of marketing’s workload within two to three years. Accenture has already deployed 14 AI agents across its 600-person marketing team, reducing campaign steps from 135 to 85 with a 25-55% speed-to-market improvement. Vercel replaced 9 of 10 inbound SDRs with a single AI agent in six weeks. Customer support hiring is down 65% in two years. The displacement is not theoretical.

But McKinsey’s data reveals the gap: while 50% of CMOs rank generative AI as a top-three investment, it ranks 17th of 20 in actual execution priorities. Everyone says it matters. Almost nobody is doing it. The challenges, McKinsey notes, are experiential rather than technical, which underscores the need for superior context engineering.

The 39-point gap between “experimenting with AI agents” (62% of companies) and “scaling them” (23%) is exactly where the Context Layer operates. It’s the infrastructure that turns experimentation into a system. AI-native B2B companies are now running eight-figure businesses with 3 human employees and 20+ AI agents, spending an average of 23% of revenue on inference costs rather than headcount. The Context Layer is the operating context that makes that model possible.

The Escape Velocity Question

Every CEO at a Series B through pre-IPO company is asking some version of the same questions: Should I hire a Head of Marketing or build an AI-native system? My team has AI tools but nothing feels different — why? How many marketers do I actually need? What does marketing look like in 18 months?

They can picture the problem. They cannot picture the solution.

The Context Layer makes the solution visible. It’s the thing that sits between your brand and the AI agents that run your go-to-market. Not AI strategy — that’s too vague. Not marketing automation — that’s last decade’s category. Not a chatbot upgrade. The infrastructure that makes all of those things actually work.

Before you hire, build, or buy — know what you’re actually missing.
